Beginners Guide to Multitenant Architecture and RAC

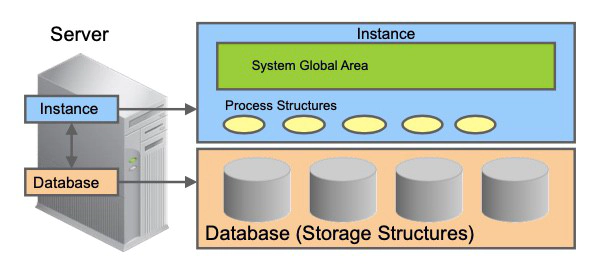

Non-CDB Architecture

In Oracle Database 12c, there are two possible configurations, non-CDB and multitenant container database. In Oracle 11g database, the only kind of database that is supported is a non-CDB. The old architecture is referred to as the non-CDB architecture—the term non-CDB will be used as a shorthand for an occurrence of a pre-12.1 database that uses the pre-12.1 architecture—that requires its own instance and, therefore, its own background processes, and memory allocation for the SGA. It also needs to store the Oracle metadata in its data dictionary. The database administrator can still create Oracle 12c non-CDBs with the same pre12.1 architecture. These databases are non-CDB

If there are multiple databases on the same server, then there is a separate and distinct instance for each database, non-CDB or CDB. An instance cannot be shared between a non-CDB and CDB. When you have to administer small departmental database applications, you have to create as many databases as applications and, therefore, multiply the number of instances, consequently the number of background processes, memory allocation for the SGAs, and provision enough storage for all data dictionaries of these databases.

When you need to upgrade your applications to a new version, you have to upgrade each database, which is time-consuming for the DBA.

Multitenant Architecture: Benefits

Consolidating many non-CDB databases onto a single platform reduces instance overhead, avoids redundant copies of data dictionaries, and consequently storage allocation, and benefits from fast provisioning, time saving upgrading, better security through separation of duties and application isolation. The new 12c multitenant architecture that consolidates databases together is a multitenant container database or CDB, and a database consolidated within a CDB, a pluggable database or PDB.

DBA resource costs are reduced with:

- No application change and very fast provisioning: A new database can be provisioned very quickly. A clone of a populated database can be created very quickly. A populated database can be quickly unplugged from its CDB on one platform and quickly plugged into a CDB on a different platform. A non-CDB can quickly be plugged into a CDB.

- Fast upgrade and patching of the Oracle Database version: The cost (time taken and human effort needed) to upgrade many PDBs is the cost of upgrading a single Oracle Database occurrence. You can also upgrade a single PDB by unplugging it and plugging it into a CDB at a different Oracle Database version

The multitenant architecture maintains:

- Secure separation of duties: The administrator of an application can do all the required tasks by connecting to the particular PDB that implements its back end. However, someone who connects to a PDB cannot see other PDBs. To manage PDBs as entities (for example, to create or drop or unplug or plug one), the system administrator needs to connect to the CDB. For these specific tasks, new privileges need to be granted.

- Isolation of applications that may not be achieved manually unless using Database Vault for example. A good example of isolation is dictionary separation enabling Oracle Database to manage the multiple PDBs separately from each other and from the CDB itself.

CDB in a Non-RAC Environment

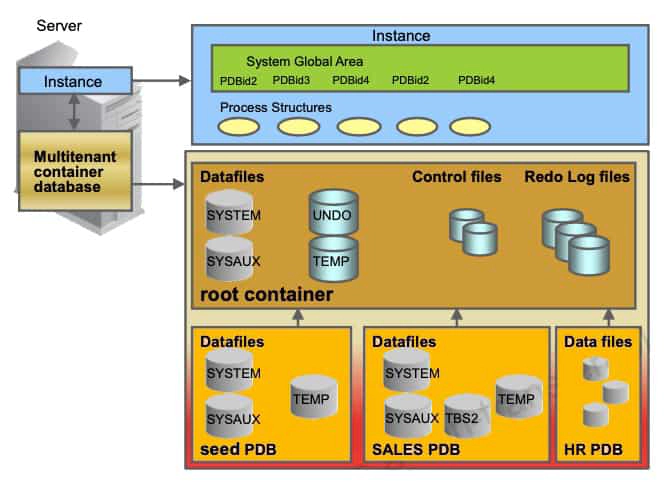

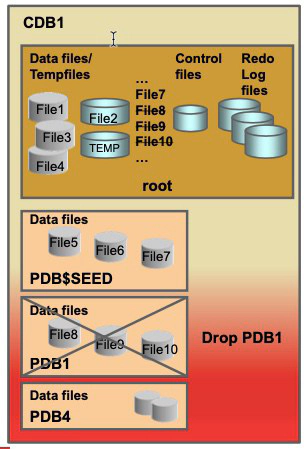

The graphic above shows a CDB with four containers: the root, the seed, and two PDBs. The two applications use a single instance and are maintained separately. At the physical level, the CDB has a database instance and database files, just as a non-CDB does.

- The redo log files are common for the whole CDB. The information it contains is annotated with the identity of the PDB where a change occurs. Oracle GoldenGate is enhanced to understand the format of the redo log for a CDB. All PDBs in a CDB share the ARCHIVELOG mode of the CDB.

- The control files are common for the whole CDB. The control files are updated to reflect any additional tablespace and data files of plugged PDBs.

- The UNDO tablespace is common for all containers in the database instance.

- A temporary tablespace common to all containers is required. But each PDB can hold its own temporary tablespace for its own local users.

- Each container has its own data dictionary stored in its proper SYSTEM tablespace, containing its own metadata, and a SYSAUX tablespace.

- The PDBs can create tablespaces within the PDB according to application needs.

- Each datafile is associated with a specific container, named CON_ID.

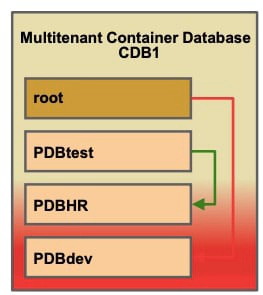

Containers

A CDB is an Oracle database that contains the root, the seed, and zero to n PDBs. What is a PDB in a CDB? A PDB is the lower part of the horizontally partitioned data dictionary plus the user’s quota-consuming data. A non-CDB cannot contain PDBs.

The multitenant architecture enables an Oracle database to contain a portable collection of schemas, schema objects, and nonschema objects that appear to an Oracle Net client as a separate database. For the PDBs to exist and work, the CDB requires a particular type of container, the root container, generated at the creation of the CDB. The root is a system-supplied container that stores common users, which are users that can connect to multiple containers, and system-supplied metadata and data. For example, the source code for system-supplied PL/SQL packages is stored in the root. If the root container exists, you can create containers of the other type, the PDB.

There is only one seed PDB in a CDB. The seed PDB is a system-supplied template that is used to create new PDBs. A CDB can contain up to 4096 PDBs, including the CDB seed, with the services being limited to 10000. The V$CONTAINERS view displays all containers including the root.

Terminology

There are three types of database administrators.

-

In a non-CDB, the DBA is responsible for all administrative tasks at the database level.

-

In a CDB, there are two levels of administration:

- A CDBA is responsible for administering the CDB instance and the root container.

- A PDBA is responsible for administering its own PDB.

There is a new terminology for new entities.

-

Common vs local:

- Common users/roles versus local users/roles: A common user is a user that has the same username and authentication credentials across multiple PDBs, unlike the local user which exists in only one PDB. A local user is a traditional user, created in a PDB and known only in its own PDB. A role created across all containers is a common role. A role created in a specific PDB is local.

- Common privileges versus local privilege: A privilege becomes common or local based on the way it is granted. A privilege granted across all containers is a common privilege. A privilege granted in a specific PDB is local.

-

CDB vs PDB level:

- CDB resource management works at CDB level, and PDB resource management at PDB level.

- Unified audit policies can be created at CDB level and PDB level.

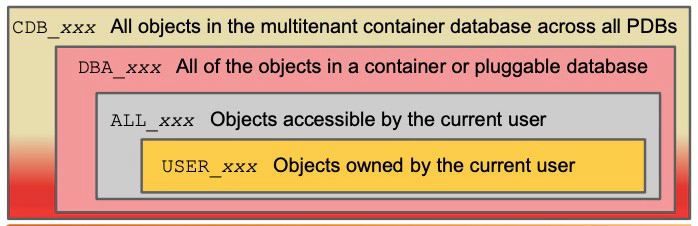

Data Dictionary Views

CDB_pdbs: All PDBs within CDB CDB_tablespaces: All tablespaces within CDB CDB_users: All users within CDB (common and local)

DBA dictionary views providing information within CDB:

SQL] SELECT view_name FROM dba_views WHERE view_name like 'CDB%';

DBA dictionary views providing information within PDB:

SQL] SELECT table_name FROM dict WHERE table_name like 'DBA%';

For backward-compatibility, DBA views show the same results in a PDB as in a non-CDB: DBA_OBJECTS shows the objects that exist in the PDB from which you run the query. This implies, in turn, that although the PDB and the root have separate data dictionaries, each data dictionary view in a PDB shows results fetched from both of these data dictionaries. The DBA_xxx views in the root show, even in a populated CDB, only the Oracle-supplied system —as is seen in a freshly created non-CDB. This is another advantage of the new architecture.

To support the duties of the CDB administrator, a new family of data dictionary views is supported with names such as CDB_xxx. Each DBA_xxx view has a CDB_xxx view counterpart with an extra column, Con_ID, which shows from which container the listed facts originate. Query the CDB_xxx views from the root and from any PDB. The CDB_xxx views are useful when queried from the root because the results from a particular CDB_xxx view are the union of the results from the DBA_xxx view counterpart over the root and all currently open PDBs. When a CDB_xxx view is queried from a PDB, it shows only the information that it shows in its DBA_xxx view counterpart. If you connect to the root and query CDB_USERS, you get the list of users, common and local, of each container. Now if you query DBA_USERS, you get the list of common users (you are aware that in the root, only common users exist). Now if you connect to a PDB, and query CDB_USERS or DBA_USERS, you get the same list of users, common and local, of the PDB.

The same backward-compatibility principle applies also to each of the familiar v$views.

Connection to a Non-RAC CDB

When you connect to a CDB, you either want to connect to the root or to a specific PDB. Any container in a CDB owns a service name.

- The root container service name is the CDB name given at the CDB creation concatenated with a domain name.

- Each new PDB is assigned a service name: the service name is the PDB name given at PDB creation concatenated with a domain name. If you create or plug a PDBtest PDB, its service name is PDBtest concatenated with a domain name. The container service names must be unique within a CDB, and even across CDBs that register with the same listener.

- You can find service names maintained in a CDB and PDBs in the CDB_SERVICES or V$SERVICES views. The PDB column shows the PDB to which the services are linked.To connect to the root, use local OS authentication or the root service name. For example, if you set the ORACLE_SID to the CDB instance name and use the command CONNECT / AS SYSDBA, you are connected to the root as a SYS user with common system privileges to manage and maintain all PDBs.

With the service name, you can use the EasyConnect syntax or the alias from tnsnames.ora. Using EasyConnect, you would enter the following connect string:

SQL> CONNECT username@"hostname:portnumber/service_name"

SQL> CONNECT username@"localhost:portnumber/service_name"

Using the tnsnames.ora file, you would enter the following connect string:

SQL CONNECT username@"localhost:net_service_name

To connect to a desired PDB, use either EasyConnect or the alias from the tnsnames.ora file.

Switching Connection

A CDB administrator can connect to any container in the CDB using either CONNECT or ALTER SESSION SET CONTAINER to switch between containers. For example, the CDB administrator can connect to root in one session, and then in the same session switch to the PDBHR container. The requirement here being a common user known in both containers, and a system privilege SET CONTAINER.

1. Using the command CONNECT allows connections under common or local users.

SQL> CONNECT / AS SYSDBA

SQL> CONNECT local_user1@PDBdev

2. Using ALTER SESSION SET CONTAINER allows connections only under a common user who is granted the new system privilege SET CONTAINER. Ensure that the AFTER LOGON trigger does not fire. Transactions that are neither committed, nor rolled back in the original container are still in a pending state while switching to another container and when switching back to the original container.

SQL> CONNECT sys@PDBtest AS SYSDBA

SQL> ALTER SESSION SET CONTAINER=PDBHR;

SQL> SHOW CON_NAME

SQL> ALTER SESSION SET CONTAINER=CDB$ROOT;

Oracle RAC and Multitenant Configuration

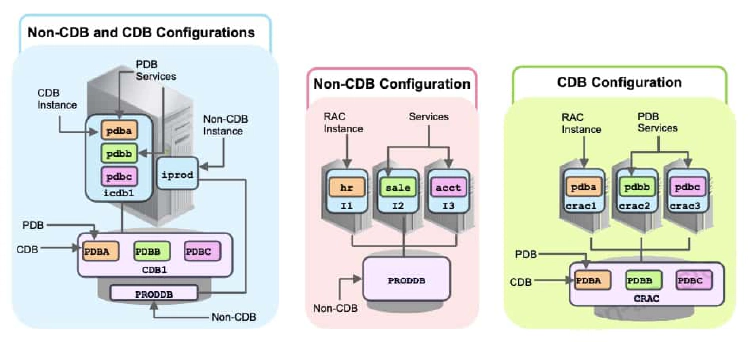

In Oracle Database 12c, there are now three possible configuration options:

- Non-CDB: The old Oracle Database 11g architecture

- Single-tenant configuration: The special case of the new architecture, which does not require the licensed option (Oracle Multitenant option)

- Multitenant configuration: Typically more than one pluggable database (PDB) per multitenant container database (CDB), but can hold zero, one, or many PDBs at any one time, taking advantage of the full capabilities of the new architecture, which requires the licensed Oracle Multitenant option

Which are the possible instance/database configurations in Oracle Database 12c?

- In a non-RAC environment, each database instance can be associated with only one non-CDB or CDB.

- In a RAC environment, several instances can be associated with a non-CDB or CDB.

- An instance is associated with an entire CDB.

A RAC CDB, exactly as a RAC non-CDB, contains:

- One UNDO tablespace per instance in shared undo mode.

- In local undo mode, every container in the CDB uses local undo

- At least two groups of redo log files per instance

- Internal services at CDB level: Like in a RAC non-CDB, SYS$BACKGROUND is used by background processes only. SYS$USERS is the default service for user sessions that are not associated with any application service. Both internal services support all the workload management features and neither one can be stopped or disabled. A special Oracle CDB service is created by default for the Oracle RAC CDB with the same name as that of the CDB.

With the multitenant architecture, each container is exposed as a service. Each PDB and the root are assigned a database service when the container is created.

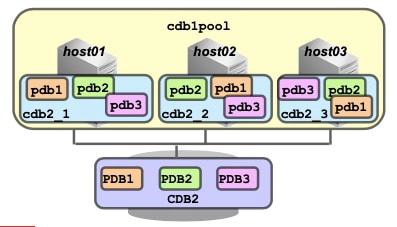

With clusterware policy-managed databases, each PDB service can be exposed across all RAC instances, across a subset of instances, or on a single instance (as shown in the slide). PDB Services can be associated with server pools. Because any server in the server pools within the cluster can run any of the CDBs, you do not have to create and maintain CDB instance-to-node-name mappings or PDB service-to-node-name mappings.

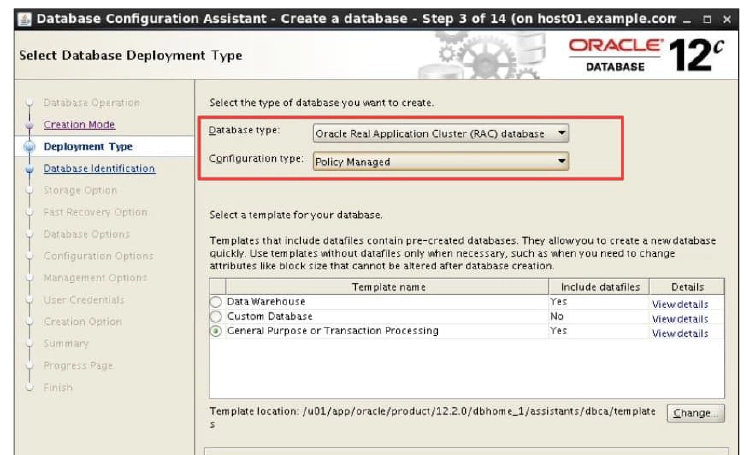

Creating a RAC CDB

When creating a RAC CDB with DBCA, define the type of database, like you do when you create a RAC non-CDB:

- An Oracle single instance database

- An Oracle Real Application Clusters (RAC) database

- An Oracle RAC One Node database

In the same step, choose, like you do when you create a RAC non-CDB, between two types of management styles:

- Policy-Managed

- Admin-Managed

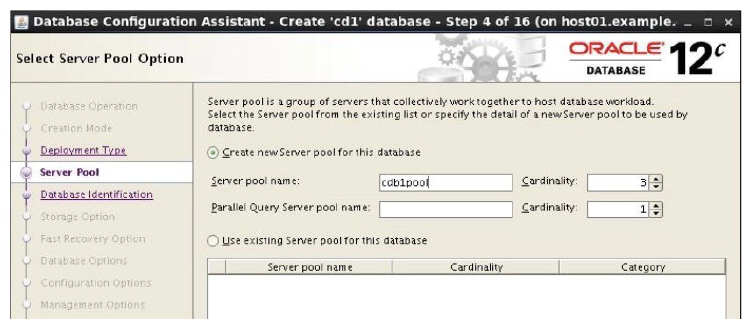

Hosting a RAC CDB in Server Pools

Because policy-managed configuration was selected, the server pool to host the new CDB must be defined. Choose between using an existing server pool or creating a new one and specifying the detail of the new server pool to be used by the CDB.

Policy-managed deployments facilitate consolidation. In the case of schema consolidation, where multiple applications are being hosted in a single database (CDB) separated into PDBs, since server pools determine which services run together or separately, you can configure and maintain required affinity or isolation of PDB services.

Managing a policy-managed database requires less configuration and reconfiguration steps than an administrator-managed one with respect to creation, sizing, patching, and load balancing. Also, because any server in the server pools within the cluster can run any of the CDB instances and PDB services, you do not have to create and maintain CDB instance-to-node-name mappings and PDB service-to-node-name mappings.

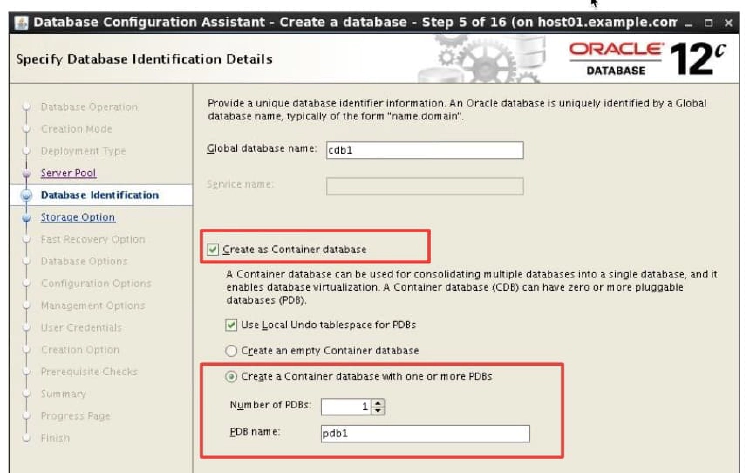

Creating a RAC CDB Including PDBs

Select the “Create As Container Database” check box to create the database as a CDB (otherwise, the database is created as a non-CDB). Starting with 12cR2, a CDB can run in local undo mode or shared undo mode. Local undo mode means that every container in the CDB uses local undo. Shared undo mode means that there is one active undo tablespace for a single-instance CDB. For an Oracle RAC CDB, there is one active undo tablespace for each instance.

Provide a pluggable database (PDB) name when using the “Create a database with default configuration” option. If the “Advanced Mode” option is selected (as shown in the slide), an empty CDB can be created with only the root and seed containers or a CDB can be created with one or several PDBs. If the CDB contains only one PDB, don’t provide an existing PDB name of another CDB. If the CDB contains several PDBs, a suffix is requested to generate the PDB names.

In Advanced Mode, the CDB can be registered with Enterprise Manager Cloud Control or configured for Enterprise Manager Database Express. The passwords for the SYS and SYSTEM users can also be set.

After CDB Creation

1. After the CDB is created, use SRVCTL to verify that there are as many CDB instances running in the server pool as nodes defined in the server pool.

$ srvctl status database -db cdb2

Instance cdb2_1 is running on node host01

Instance cdb2_2 is running on node host02

Instance cdb2_3 is running on node host03

2. The UNDO tablespaces are created in the root container and there are as many UNDO tablespaces as CDB instances.

$ export ORACLE_SID=cdb2_1

$ sqlplus / as sysdba

SQL> SELECT tablespace_name, con_id FROM cdb_tablespaces WHERE contents='UNDO';

TABLESPACE_NAME CON_ID

------------------------------ ----------

UNDOTBS1 1

UNDOTBS2 1

UNDOTBS3 1

3. The groups of redo log files are displayed with the V$LOGFILE view:

SQL> select group#, con_id from v$logfile;

GROUP# CON_ID

---------- ----------

2 0

1 0

5 0

6 0

3 0

4 0

Note that there are six redo log groups because there are three CDB instances. Note also that the container ID is 0, referring to the CDB, whereas the container ID is 1 for the data files of the UNDO tablespaces referring to the specific root container.

Connecting Using CDB/PDB Services

Use LSNRCTL to verify that there are as many CDB instances running on the nodes of the server pool as nodes in the server pool, and as many PDB services as PDBs managed by the CDB.

$ lsnrctl status

<pre><code>

Service "cdb2" has 1 instance(s). <strong>-> CDB service</strong>

Instance "cdb2_3", status READY, has 1 handler(s) for this

service... <strong>-></strong> One of the three CDB instances

Service "pdb2" has 1 instance(s). <strong>-></strong> PDB service

Instance "cdb2_3", status READY, has 1 handler(s) for

this service... <strong>-></strong> Attached to one of the three CDB instances

</code></pre>

To connect to the root container to perform CDB administrative tasks, use the CDB service. To connect to a PDB to perform PDB administrative tasks, use one of the PDB services. Either use an EasyConnect string or a net service name declared in the tnsnames.ora file as shown in the examples below.

\- The services for the root and each PDB are started on each node of the server pool: PDB2 can be accessed on any CDB instance.

$ lsnrctl status … Service “pdb2” has 1 instance(s). Instance “cdb2_3”, status READY, has 1 handler(s) for this service…

\- To connect to the root container on one of the three CDB instances:

SQL> connect system@“host01/cdb2” ### for root

\- To connect to a PDB on one of the three CDB instances:

SQL> connect system@“host01/pdb2” ### for PDB

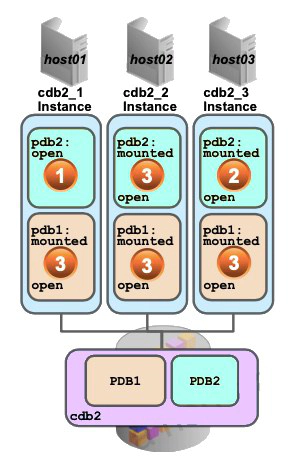

## Opening a PDB in a RAC CDB

When the CDB is started with SRVCTL, the following operations occur:

- The CDB instances are started on all nodes of the server pool.

- The CDB control files are opened for the instances: the root and PDB containers are mounted.

- The root is opened in READ WRITE mode for all instances, the seed is opened in READ ONLY mode for all instances, and the PDBs remain mounted (closed).

To open one or several PDBs on one or some or all of the CDB instances, connect to the root of any CDB instance and issue an ALTER PLUGGABLE DATABASE OPEN statement, specifying the following clauses:

- The INSTANCES clause to modify the state of the PDB in the specified Oracle RAC instances. If you omit this clause, then the state of the PDB is modified only in the current instance as shown in the first example of the slide where the pdb2 PDB is opened in the cdb2_1 instance on host01, but remains mounted in the other two CDB instances. Use instance_name to specify one or more instance names in a comma-separated list enclosed in parentheses to modify the state of the PDB in those instances as shown in the second example of the slide where the pdb2 PDB is opened in the cdb2_3 instance on host03, but remains mounted in the cdb2_2 instance.

- Specify ALL to modify the state of the PDB in all instances. Specify ALL EXCEPT to modify the state of the PDB in all instances except the specified instances. If the PDB is already open in one or more instances, then you can open it in additional instances, but it must be opened in the same mode as in the instances in which it is already open. This operation opens the data files of the PDBsand provides availability to users.

Use the open_mode column from the V\$PDBS view to verify that all PDBs are in READ WRITE open mode except the seed being still in READ ONLY open mode. You can also open a PDB while connected as SYSDBA within the PDB. In this case, it is not necessary to name the PDB to open.

### Summary of commands

1\. Start the CDB instances.

When the CDB is started with SRVCTL, the following operations occur:

- The CDB instances are started on all nodes of the server pool.

- The CDB control files are opened for the instances: the root and PDB containers are mounted.

- The root is opened in READ WRITE mode for all instances, the seed is opened in READ ONLY mode for all instances, and the PDBs remain mounted (closed).

To open one or several PDBs on one or some or all of the CDB instances, connect to the root of any CDB instance and issue an ALTER PLUGGABLE DATABASE OPEN statement, specifying the following clauses:

- The INSTANCES clause to modify the state of the PDB in the specified Oracle RAC instances. If you omit this clause, then the state of the PDB is modified only in the current instance as shown in the first example of the slide where the pdb2 PDB is opened in the cdb2_1 instance on host01, but remains mounted in the other two CDB instances. Use instance_name to specify one or more instance names in a comma-separated list enclosed in parentheses to modify the state of the PDB in those instances as shown in the second example of the slide where the pdb2 PDB is opened in the cdb2_3 instance on host03, but remains mounted in the cdb2_2 instance.

- Specify ALL to modify the state of the PDB in all instances. Specify ALL EXCEPT to modify the state of the PDB in all instances except the specified instances. If the PDB is already open in one or more instances, then you can open it in additional instances, but it must be opened in the same mode as in the instances in which it is already open. This operation opens the data files of the PDBsand provides availability to users.

Use the open_mode column from the V\$PDBS view to verify that all PDBs are in READ WRITE open mode except the seed being still in READ ONLY open mode. You can also open a PDB while connected as SYSDBA within the PDB. In this case, it is not necessary to name the PDB to open.

### Summary of commands

1\. Start the CDB instances.

host01 $ srvctl start database -db cdb2

2\. Open a PDB in the current instance:

SQL> CONNECT sys@host01/cdb2 AS SYSDBA SQL> ALTER PLUGGABLE DATABASE pdb2 OPEN;

3\. Open a PDB in some instances:

SQL> CONNECT sys@host03/cdb2 AS SYSDBA SQL> ALTER PLUGGABLE DATABASE pdb2 OPEN INSTANCES = (‘cdb2_3’);

4\. Open a PDB in all instances:

SQL> ALTER PLUGGABLE DATABASE ALL OPEN INSTANCES=ALL;

## Closing a PDB in a RAC CDB

When all instances of a CDB are shut down, the data files of the root and seed containers and all PDBs are closed, all the control files are closed, and the instances shut down. A PDB can be closed with the INSTANCES clause as shown in the slide. In the first example with the ALTER PLUGGABLE DATABASE command, the PDB is closed in the current instance of the CDB. In the second example, the PDB is closed in the cdb2_3 instance of the CDB. In the last example, the PDB is closed in all the CDB instances.

If you need to close all PDBs in all the CDB instances but keep the root opened for maintenance purposes, use the following command:

ALTER PLUGGABLE DATABASE ALL CLOSE INSTANCES = ALL;

When the PDB is closed on all the CDB instances, the data files of the PDB are closed. To display the PDB open mode, use the V\$PDBS view OPEN_MODE column in each of the CDB instances. It may display MOUNTED in some of the instances and READ WRITE in other instances.

To instruct the database to reopen the PDB on a different Oracle RAC instance, use the RELOCATE clause. Specify RELOCATE to reopen the PDB on a different instance that is selected by Oracle Database or RELOCATE TO 'instance_name' to reopen the PDB in the specified instance.

### Summary of commands

1\. Shut down the instances of a RAC CDB:

host01 $ srvctl stop database -db cdb2

2\. Close a PDB:

a. In the current instance only:

SQL> CONNECT sys@host01/cdb2 AS SYSDBA SQL> ALTER PLUGGABLE DATABASE pdb2 CLOSE;

b. In some or all instances of the CDB:

SQL> ALTER PLUGGABLE DATABASE pdb2 CLOSE INSTANCES=(‘cdb2_3’); SQL> ALTER PLUGGABLE DATABASE pdb2 CLOSE INSTANCES = ALL;

c. In the current instance and reopen it in another instance:

SQL> ALTER PLUGGABLE DATABASE pdb2 CLOSE RELOCATE TO ‘cdb2_3’;

## Types of Services

There are two types of services:

1\. **Internal services**: The RDBMS supports two internal services. SYS$BACKGROUND is used by the background processes only. SYS$USERS is the default service for user sessions that are not associated with any application service. Both internal services support all the workload management features and neither one can be stopped or disabled.

2\. **Application services**: CDB and PDB services. - A special Oracle CDB service is created by default for the Oracle RAC CDB. This default service is always available on all instances in an Oracle RAC environment, unless an instance is in restricted mode. This service is useful to connect on another node instance than the instance the session is connected to. - Each PDB is assigned a default service. The name of the service is the PDB name. The service is the only method to connect to a PDB.

## Managing Services

There are two categories of services to manage:

- **Default PDB services**: Each new PDB is assigned a default service. The name of the service is the PDB name. This service is started when the PDB is opened. When the CDB is opened and an AFTER STARTUP ON DATABASE trigger triggers an ALTER PLUGGABLE DATABASE ALL OPEN, the services are started.

- **Dynamic PDB services**: Assign one dynamic database service to each PDB to coordinate start, stop, and placement of PDBs across instances in a RAC CDB, using SRVCTL. When a dynamic PDB service is started by the clusterware, the PDB is automatically opened. There is no need to create an AFTER STARTUP ON DATABASE trigger to open the PDB. Because PDBs can be managed using dynamic services, typical Oracle RAC-based management practices apply. So, if a PDB service is in the ONLINE state when Oracle Clusterware is shut down on a server hosting this service, then the service will be restored to its original state after the restart of Oracle Clusterware on this server. This way, opening PDBs is automated as with any other Oracle RAC database.

## Affinitizing PDB Services to Server Pools

Policy-managed databases facilitate the management of services, because the services are assigned to a single server pool and run as singletons or uniform across all servers in the pool. You no longer have to create or maintain explicit preferred and available CDB instance lists for each PDB service. If a server moves into a server pool because of manual relocation or a high availability event, all uniform PDB services in the CDB instances are automatically started. If a server hosting one or more singleton services goes down, those services will automatically be started on one or more of the remaining servers in the server pool

To assign a PDB service to a CDB, use SRVCTL as shown in the example in the slide using the **–CARDINALITY UNIFORM** parameter. The PDB service will be uniformly managed across all nodes in the server pool as shown in the image below. In this case, each PDB service is available in each of the CDB instances.

host01$ srvctl add service -db cdb2 -pdb pdb1 –service pdb1srv -policy automatic -serverpool cdb1pool -cardinality uniform

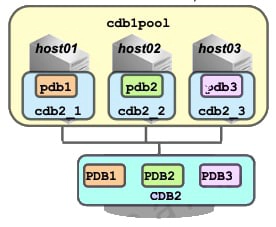

If you want to have the PDB service running as a singleton service in the same server pool, use the **–CARDINALITY SINGLETON** parameter as shown in the image below. In this case, the pdb1 service is available in one of the CDB instances.

If you want to have the PDB service running as a singleton service in the same server pool, use the **–CARDINALITY SINGLETON** parameter as shown in the image below. In this case, the pdb1 service is available in one of the CDB instances.

host01$ srvctl add service -db cdb2 -pdb pdb1 –service pdb1srv -policy automatic -serverpool cdb1pool -cardinality singleton

Once the PDB service is created, start the service using SRVCTL.

Once the PDB service is created, start the service using SRVCTL.

host01$ srvctl start service -db cdb2 -service pdb1srv

If the pdb1 service is in the ONLINE state when Oracle Clusterware is shut down on a server hosting this service, then the service will be restored to its original state after the restart of Oracle Clusterware on this server.

## Adding a PDB to a RAC CDB

To create a new PDB from the seed (the template PDB), connect to the root as a common user with the CREATE PLUGGABLE DATABASE system privilege and execute the CREATE PLUGGABLE DATABASE statement as shown below. The FILE_NAME_CONVERT clause designates first the source directory of the seed datafiles and second the destination directory for the new PDB datafiles.

SQL> CREATE PLUGGABLE DATABASE pdb1 2 ADMIN USER admin1 IDENTIFIED BY p1 ROLES=(CONNECT) 3 FILE_NAME_CONVERT = (‘PDB$SEEDdir’, ‘PDB1dir’);

To create a new PDB from another PDB, connect to the root as a common user with the CREATE PLUGGABLE DATABASE system privilege and execute the CREATE PLUGGABLE DATABASE statement as shown below. Before proceeding with the CREATE PLUGGABLE DATABASE statement, the source PDB needs to be in READ ONLY mode in all CDB instances.

SQL> CREATE PLUGGABLE DATABASE pdb3 FROM pdb1;

When the statement completes, open the PDB in the required CDB instances. This starts the default service created for the new PDB in the CDB instances.

## Dropping a PDB from a RAC CDB

1\. Remove the dynamic PDB services.

1\. Remove the dynamic PDB services.

host01$ srvctl remove service -db cdb1 -service mypdb1serv

2\. Close the PDB in all instances.

SQL> ALTER PLUGGABLE DATABASE pdb1 CLOSE INSTANCES=ALL;

3\. Drop the PDB.

SQL> DROP PLUGGABLE DATABASE pdb1 [INCLUDING DATAFILES];

When you no longer need the data in a PDB, you can drop the PDB. When you drop a PDB specifying **INCLUDING DATAFILES**, all of its datafiles listed in the control files are deleted. With or without the clause INCLUDING DATAFILES, the DROP PLUGGABLE DATABASE statement modifies the control files to eliminate all references to the dropped PDB.

**KEEP DATAFILES** is the default behavior to keep the data files, useful in scenarios where an unplugged PDB is plugged into another CDB or replugged into the same CDB. Because the dynamic PDB services are not removed, remove them before dropping the PDB.